RAG and GraphRAG: Shared Mission & Different Architectures

Quick Answer (TL;DR)

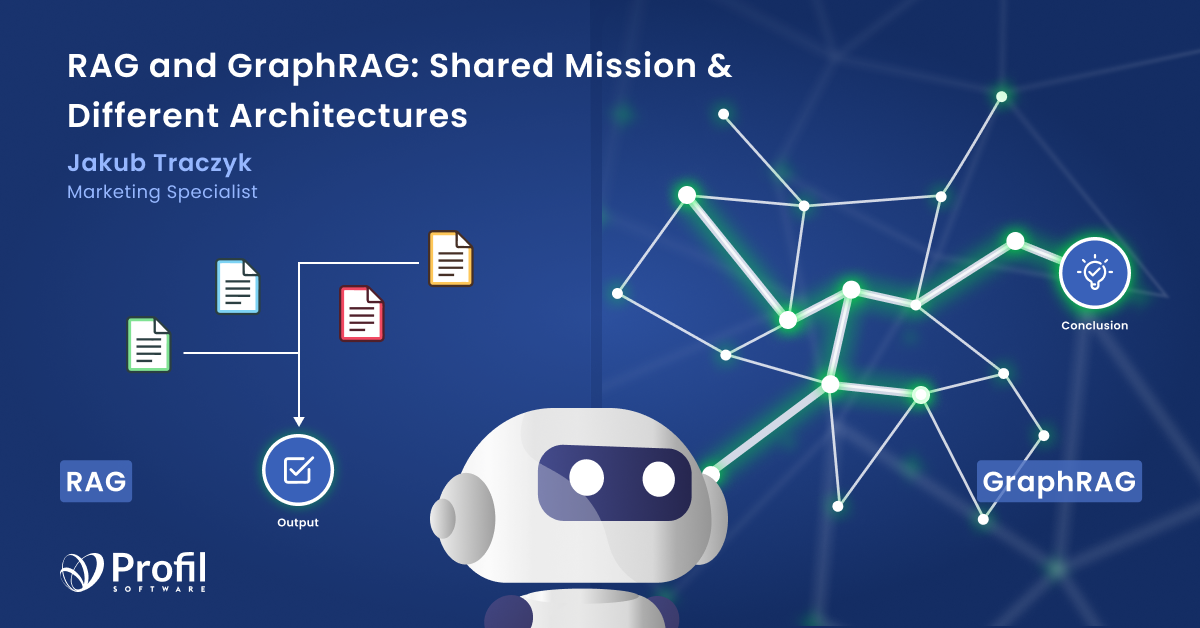

Moving from basic AI to advanced reasoning requires a better way to handle external knowledge. Standard search tools find relevant content, but they often miss the deeper links hidden in large datasets. To improve the quality of the final response, you generally have two main choices:

- Traditional RAG: Best for simple, independent facts (like FAQs). It is fast and low-cost but lacks the reasoning needed for complex natural language processing tasks.

- GraphRAG: Uses a graph representation to link facts together. This is essential for difficult "multi-hop" questions where the answer depends on connected information.

Proper LLM integration with these tools also lets you use community summaries: short explanations generated from multiple data sources that help the model understand context more effectively.

You can find a full overview and additional resources below to help you choose the best option.

The Next Chapter in AI Reasoning

The focus in AI has shifted from simply using large language models to connecting them with reliable, company-specific data. In this context, using specialized, industry-specific, or private data in RAG and GraphRAG systems has become essential. Such specially prepared datasets are often referred to as domain-specific data, and they are crucial for improving the accuracy and relevance of responses in specialized fields, because generic datasets cannot support complex questions or precise answers.

Connecting a model to external data in this way is called retrieval-augmented generation (RAG), and it has become the standard way to reduce hallucinations and keep AI answers accurate. Classic RAG works well for straightforward searches, but it struggles with more complex questions. That's where the GraphRAG approach comes in: it adds a structural layer with a community hierarchy that helps AI spot connections between pieces of information. Because those links are built on clear, structured facts, the risk of the AI making things up drops.

The 2024 Microsoft Breakthrough

The term GraphRAG was formally introduced and popularized by Microsoft Research in 2024. In their paper, the researchers explained how combining LLMs with knowledge graphs allows for global summarization. By using community summaries, which are explanations generated from groups of related data, they showed how AI can understand the big picture instead of just small, separate facts.
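To make the idea of community summaries more concrete, here is a minimal sketch. It is not Microsoft's implementation: it assumes connected components as a simple stand-in for a real community detection algorithm, and it uses a template string where a real system would ask an LLM to write the summary. The entity names and the `find_communities` and `summarize` helpers are hypothetical.

```python
def find_communities(edges):
    """Group nodes into connected components.

    Assumption: connected components stand in for a real
    community detection algorithm.
    """
    adjacency = {}
    for a, b in edges:
        adjacency.setdefault(a, set()).add(b)
        adjacency.setdefault(b, set()).add(a)

    seen, communities = set(), []
    for start in sorted(adjacency):
        if start in seen:
            continue
        # Depth-first walk that collects every node reachable from `start`.
        stack, members = [start], set()
        while stack:
            node = stack.pop()
            if node in members:
                continue
            members.add(node)
            stack.extend(adjacency[node] - members)
        seen |= members
        communities.append(sorted(members))
    return communities


def summarize(members):
    # Placeholder: a real system would ask an LLM to write this summary.
    return f"Community of {len(members)} related entities: {', '.join(members)}"


# Hypothetical relationships extracted from documents.
edges = [("Alice", "Acme"), ("Bob", "Acme"), ("Carol", "Globex")]
communities = find_communities(edges)
summaries = [summarize(c) for c in communities]
for line in summaries:
    print(line)
```

At query time, a system built this way could pass the summaries to the model instead of individual text chunks, which is what gives it a view of the whole dataset.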

The Evolution of AI: Why RAG Changes Everything

Retrieval-Augmented Generation is a modern approach that improves LLMs by connecting them to external data sources. Instead of relying on their fixed knowledge, RAG systems pull relevant information from up-to-date databases, document repositories, or other knowledge stores whenever a question is asked.

These systems use semantic search to find text that is closely related in meaning to the user’s query. This integration allows LLMs to generate answers that are not only more accurate, but also based on the latest and most relevant information. That is why RAG has become a popular choice for question-answering, summarization, and intelligent chatbots across many industries.

Shared Purpose: Giving LLMs Reliable Context

Despite their technical differences, RAG and GraphRAG share the same key goal: providing LLMs with factual and reliable context. This helps the model generate a relevant answer that closely aligns with the user's intent by using clear and accurate information.

LLMs are not databases but reasoning engines with a knowledge cut-off, which means their information stops at a certain point in time. To prevent them from making things up, both techniques pull relevant facts from your private documents and external sources, so that answers reflect real-world information. GraphRAG takes this a step further by including more complete background information, which helps the model deliver answers that are more precise and trustworthy.

Common features include

Traditional RAG: Proven Strengths And Clear Limits

Traditional RAG relies on vector search. It works by breaking your documents into text chunks, such as short paragraphs, and translating them into a set of numbers that capture their core meaning. When you ask a question, the system does the same: it converts your query into numbers to find the pieces of text that are the best match. Instead of just hunting for keywords, it finds the information that actually shares the same meaning as your question.
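The retrieval step described above can be sketched in a few lines. This is a toy illustration, not a production pipeline: the `embed` function is a bag-of-words counter standing in for a real embedding model, so it only captures word overlap, whereas real embeddings capture meaning. The sample chunks are invented for the example.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts (assumed stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], top_k: int = 1) -> list[str]:
    """Return the top_k chunks most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(query_vector, embed(c)), reverse=True)
    return ranked[:top_k]


# Hypothetical document chunks.
chunks = [
    "Employees receive 25 days of paid vacation per year.",
    "Working hours are 9:00 to 17:00, Monday to Friday.",
    "Expense reports must be submitted by the fifth of each month.",
]

best = retrieve("What is our vacation policy?", chunks)
print(best[0])
```

In a real system the retrieved chunk would then be placed in the LLM prompt as context for generating the answer.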

This approach works well for simple user questions such as ‘What is our vacation policy?’ or ‘What are the working hours?’ where the system just needs to find the right paragraph and return the answer. Everything works almost perfectly until the questions get more complex.

There are three main reasons why

Knowledge Graph: The Missing Link in RAG Understanding

A knowledge graph is a way of organizing information that shows how different things, like people, companies, or products, are connected. The data is stored as nodes (points) and edges (the lines between them), making relationships easy to see. This kind of graph can be kept in a graph database, such as Neo4j, which helps manage and query the data.
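As a rough illustration of that structure, the sketch below stores a few facts as (subject, relation, object) triples in plain Python. The entities, the relation names, and the `neighbors` helper are hypothetical. A graph database would replace the in-memory dictionary in practice.

```python
from collections import defaultdict

# Hypothetical facts as (subject, relation, object) triples.
triples = [
    ("Alice", "WORKS_AT", "Acme"),
    ("Acme", "SELLS", "WidgetPro"),
    ("Bob", "WORKS_AT", "Acme"),
]

# Adjacency list: node -> list of (relation, neighbor) pairs.
graph = defaultdict(list)
for subj, rel, obj in triples:
    graph[subj].append((rel, obj))


def neighbors(node, relation=None):
    """Return nodes one step away from `node`, optionally filtered by relation."""
    return [n for r, n in graph.get(node, []) if relation is None or r == relation]


print(neighbors("Alice"))
print(neighbors("Acme", "SELLS"))
```

Each triple is one edge, so a question such as "where does Alice work?" becomes a lookup instead of a text search.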

In a RAG system, a knowledge graph works together with large language models to make searches smarter, provide better context, and show how different pieces of data relate to each other. This combination goes beyond what vector-based systems can do and makes it easier to explore real connections and gain deeper insights.

Why GraphRAG Solves Complex Problems with Structured and Unstructured Data

GraphRAG enables more accurate answers by combining the strengths of LLMs with the structure of knowledge graphs. It represents important ideas or entities as nodes and connects them through clear and direct relationships. This method keeps the original meaning and context instead of splitting information into small, unrelated pieces.

As a result, the system can understand how different parts of information fit together and provide more accurate and intelligent answers by focusing on relevant entities during the retrieval process. GraphRAG also combines structured data from knowledge graphs with unstructured data from input documents, improving the depth and quality of retrieval.

Some advantages of this approach:

- Breaking the multi-hop barrier: Connecting related pieces of information helps the system find links between facts and answer complex questions more accurately.

- Understanding deep relationships: Following logical connections between relevant nodes and related concepts yields a reported accuracy of about 87%.

- Reducing hallucinations: By analyzing multiple pieces of information and identifying links between data, GraphRAG helps reduce the number of incorrect answers.
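A multi-hop question can be pictured as a path search through the graph. The following is a minimal sketch, assuming a small set of hypothetical triples and a breadth-first search. Real GraphRAG systems typically combine traversal with LLM-based retrieval, so treat this as an illustration of the idea only.

```python
from collections import deque

# Hypothetical (subject, relation, object) facts.
edges = [
    ("Alice", "WORKS_AT", "Acme"),
    ("Acme", "SUPPLIED_BY", "Globex"),
    ("Globex", "BASED_IN", "Berlin"),
    ("Bob", "WORKS_AT", "Initech"),
]


def find_path(start, goal, edges, max_hops=3):
    """Breadth-first search for the shortest chain of facts linking start to goal."""
    adjacency = {}
    for subj, rel, obj in edges:
        adjacency.setdefault(subj, []).append((rel, obj))

    queue = deque([(start, [])])
    visited = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        if len(path) == max_hops:
            continue  # do not expand beyond the hop limit
        for rel, nxt in adjacency.get(node, []):
            if nxt not in visited:
                visited.add(nxt)
                queue.append((nxt, path + [(node, rel, nxt)]))
    return None  # no connection within max_hops


# "In which city is the supplier of Alice's employer based?"
path = find_path("Alice", "Berlin", edges)
for fact in path:
    print(fact)
```

The returned chain of three facts is the kind of connected evidence that a single vector lookup would be unlikely to assemble, because no single chunk contains all three facts.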

But are there really no drawbacks to this method?

Performance and Cost Comparison

Compared to standard RAG, GraphRAG performs much better on tasks that require understanding connections and complex reasoning. It achieves significantly higher accuracy, especially when analyzing relationships between pieces of information or handling multi-step questions. However, this precision comes at a price: GraphRAG takes more time to generate answers and costs more because of the knowledge extraction process, so plan your budget carefully before adopting it.

Real-World Examples: GraphRAG in Action

Fraud detection is a great example of how GraphRAG can help us better understand large, complex datasets. In this area, it's important to map clear connections between transactions, accounts, and people, because that's how you spot suspicious patterns that would otherwise be easy to miss. Turning financial data into a knowledge graph lets GraphRAG uncover hidden links that may reveal fraud and expose unusual behavior.

This method not only makes fraud detection more accurate but also gives clear, easy-to-understand results that link directly to the data behind them. GraphRAG helps organizations find and stop suspicious activity faster and more effectively, even when the data is large, complex, and constantly changing.
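As an illustration of the kind of pattern a graph makes visible, the sketch below looks for money that leaves one account, passes through an account owned by someone else, and lands in another account of the original owner. The data, the pattern itself, and the `routed_back_to_owner` helper are hypothetical examples, not a description of any specific fraud system.

```python
from collections import defaultdict

# Hypothetical graph data: (person, account) ownership and (source, target) transfers.
owns = [("P1", "A1"), ("P1", "A2"), ("P2", "A3")]
transfers = [("A1", "A3"), ("A3", "A2"), ("A2", "A1")]


def routed_back_to_owner(owns, transfers):
    """Find two-hop transfers A -> X -> B where A and B share an owner but X does not."""
    owner_of = {account: person for person, account in owns}
    outgoing = defaultdict(list)
    for src, dst in transfers:
        outgoing[src].append(dst)

    hits = []
    for src, mids in list(outgoing.items()):
        owner = owner_of.get(src)
        if owner is None:
            continue  # skip accounts with unknown ownership
        for mid in mids:
            if owner_of.get(mid) == owner:
                continue  # the intermediary must belong to someone else
            for dst in outgoing.get(mid, []):
                if dst != src and owner_of.get(dst) == owner:
                    hits.append((src, mid, dst))
    return hits


suspicious = routed_back_to_owner(owns, transfers)
print(suspicious)
```

Each hit points back to the exact accounts and transfers involved, which is what makes graph-based results easy to trace to the underlying data.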

Implementing GraphRAG: Key Steps and Considerations

Creating an effective GraphRAG system requires a few clear steps to make sure it can handle complex questions and provide meaningful results. The process starts with building a knowledge graph suited to your field by defining key concepts, their connections, and essential details. Next, you select a database that stores large volumes of information efficiently and allows quick access when needed. It should also link smoothly with other data sources to keep the knowledge base fresh and accurate.

Finally, it is important to evaluate and improve your system. This means checking that the answers are correct and make sense, tuning the connection between the language model and the database, and updating the knowledge graph when new information appears. By following these steps and considering the unique requirements of your case, you can implement a GraphRAG system that delivers reliable, high-quality answers to even the most challenging queries.

What’s the best option for you?

When deciding between Traditional RAG and GraphRAG for your AI project, it's crucial to evaluate your specific data characteristics, performance needs, and business priorities. Each approach has different strengths that make it better suited to different scenarios.

Below, we outline clear guidelines to help you determine which architecture aligns best with your use case, ensuring you choose the right tool to deliver accurate, efficient, and reliable AI-powered answers.

Finding the right balance between speed, cost, and depth is the key to a successful AI implementation.
